This is an exact duplicate of a post I just made over on Big Robot. There is also a lot more stuff over there, including videos of hulking stone monoliths and more details of the games we are working on. Go take a look.

The time has come to reveal a little more of how we are progressing with the world generation of Game 2 / Lodestone. There have been a lot of changes since the previous post I made on the procedural techniques we are using. As a result of various successful (and failed) experiments, the terrain/world engine is now much more flexible and is producing some truly epic landforms. In this post I am going to outline some of the key elements of our current world engine.

VOXELS

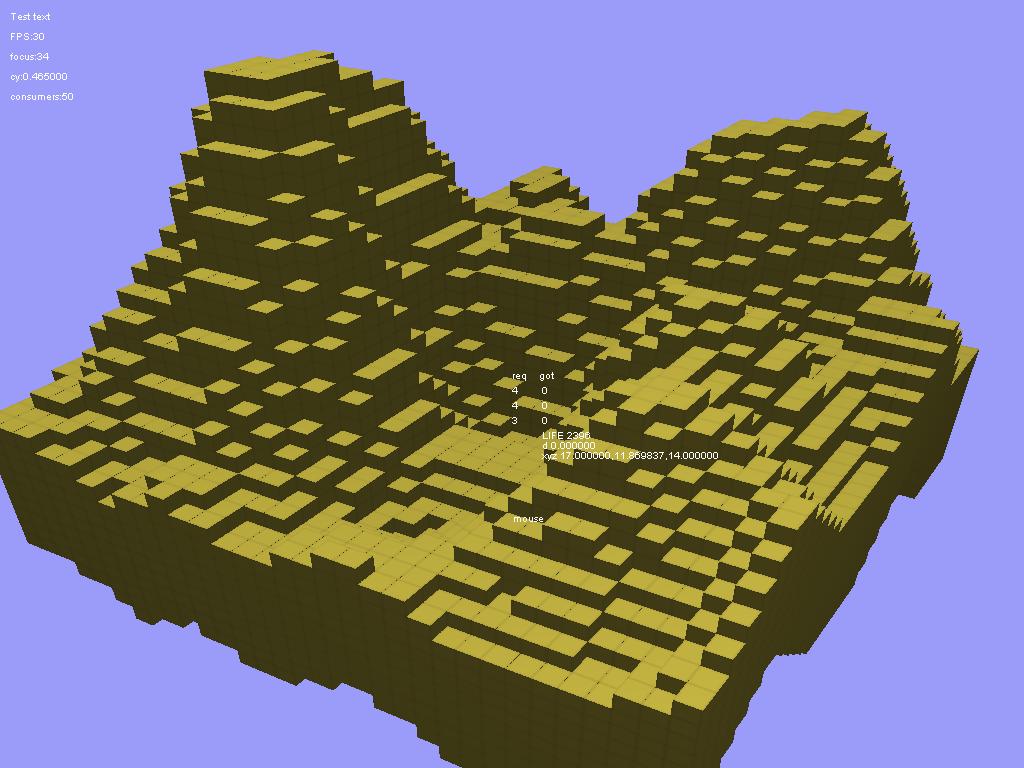

Well, everyone knows voxels are all the rage right now, but wait, we aren’t making a game with either ‘craft’ or ‘mine’ in the title! (Lodestone is about exploration, not construction.) Voxels are incredibly useful for storing 3D data, and they are also ideal candidates for the application of procedural noise shaping functions. Voxel datasets can be quite memory hungry, since they define forms as density clouds which record data for empty space as much as for solid objects, but they are very flexible and structurally formal. In previous 2D heightmap generation I used various noise functions to create interesting terrain, and it’s fairly trivial to extend these functions to populate a 3D array instead of a flat matrix.

Of course we can’t just use a 3D density array directly or the game would look like a confusing field of stars. Most of the points in our array should actually be considered empty, so we apply a threshold filter that essentially ignores any cells in our 3D array that are under a specific value. What we are left with is a set of solid cells whose values are at or above the threshold. This is essentially how cube world games like Minecraft operate.
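As a rough illustration of the threshold step, here is a minimal Python sketch. The hash-based `hash_noise` function, the grid size and the threshold values are all assumptions made for the example, not the noise functions or settings used in our engine.

```python
def hash_noise(x, y, z, seed=0):
    """Hypothetical stand-in for a noise function: a repeatable value in [0, 1)
    for an integer grid point."""
    n = (x * 73856093) ^ (y * 19349663) ^ (z * 83492791) ^ (seed * 2654435761)
    n = ((n ^ (n >> 13)) * 1274126177) & 0xFFFFFFFF
    return n / 2**32


def build_density(size, seed=0):
    """Fill a size x size x size nested list with density values."""
    return [[[hash_noise(x, y, z, seed) for z in range(size)]
             for y in range(size)]
            for x in range(size)]


def solid_cells(density, threshold):
    """Return the (x, y, z) of every cell at or above the threshold.
    Everything below the threshold is treated as empty space."""
    return [(x, y, z)
            for x, plane in enumerate(density)
            for y, row in enumerate(plane)
            for z, value in enumerate(row)
            if value >= threshold]


grid = build_density(8, seed=1)
cubes = solid_cells(grid, threshold=0.5)
```

In a cube world, each entry in `cubes` would become one block. Raising the threshold leaves fewer solid cells, lowering it fills the space in.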

Mapping voxel points in the 3D array to cubes is a nice and direct process that allows for quite fast generation and also for easy terrain modification (editing one point = editing one cube), but it inevitably leads to that distinctive boxy look. We wanted something a little different.

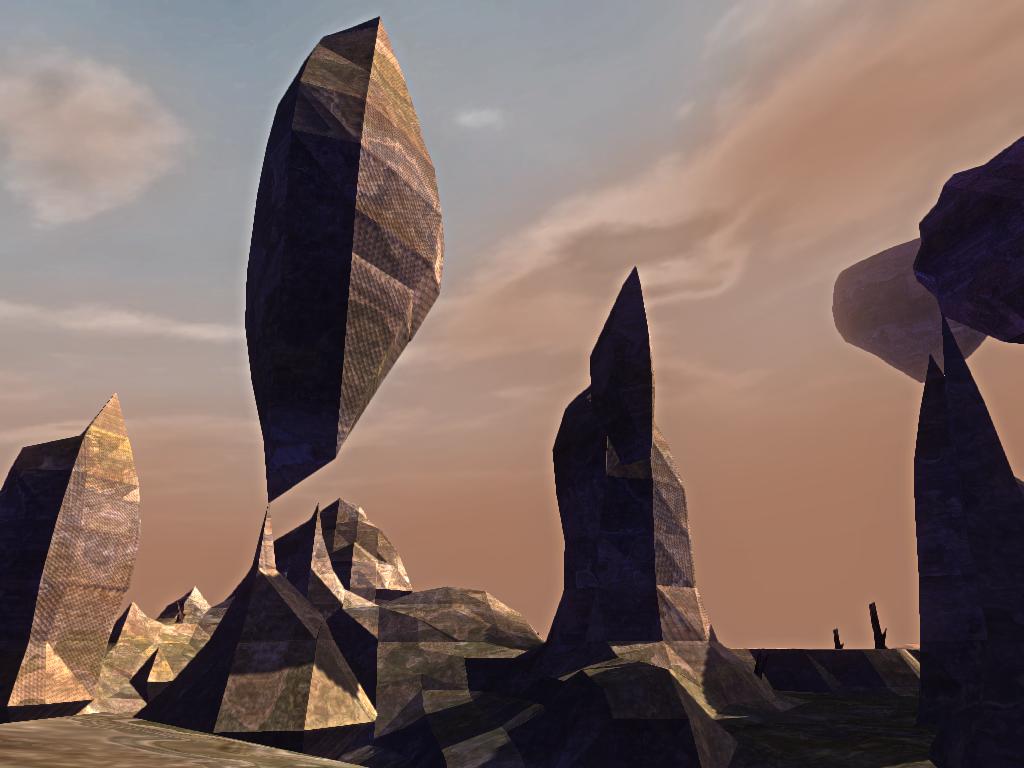

An alternative to using cubes is to ‘skin’ the density values in a polygon mesh. This leads to a more organic and flowing form, and is the route I took when translating our original 2D heightmap to a 3D space. There are various functions to produce polys from point clouds, most being related to the marching cubes algorithm. These functions take a 3D array of values and calculate the ‘surface’ of these values based on a specific threshold. Once a poly mesh is produced it can then be drawn like any 3D model in the game world. I had to tinker a little to calculate appropriate normals for the resulting mesh but soon came up against the nightmare problem of calculating UV texture co-ords for the polys.
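One common way to get normals for a mesh skinned from density data is to take the gradient of the density field with central differences. The sketch below shows that general idea in Python. It is an assumption for illustration rather than the exact approach in our engine, and it assumes density is higher inside solid matter, so the normal is the negated gradient. The `density_normal` and `sphere` names are made up for the example.

```python
import math


def density_normal(density, x, y, z, eps=0.01):
    """Estimate an outward surface normal at (x, y, z) from a density function.

    Assumes density increases towards solid matter, so the gradient points
    inwards and the normal is its negation.
    """
    gx = density(x + eps, y, z) - density(x - eps, y, z)
    gy = density(x, y + eps, z) - density(x, y - eps, z)
    gz = density(x, y, z + eps) - density(x, y, z - eps)
    length = math.sqrt(gx * gx + gy * gy + gz * gz)
    if length == 0.0:
        return (0.0, 1.0, 0.0)  # flat density: fall back to 'up'
    return (-gx / length, -gy / length, -gz / length)


def sphere(x, y, z):
    """Test density: a solid ball of radius 5 centred on the origin."""
    return 5.0 - math.sqrt(x * x + y * y + z * z)


normal = density_normal(sphere, 5.0, 0.0, 0.0)
```

On the surface of the test sphere the estimated normal points straight out from the centre, which is what you would expect.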

TEXTURING

UV calculation is almost impossible, due to the constant size and directional changes of the polygons (unlike reliable cubes!). The best solution is to do all the texture mapping in a shader, using a combination of triplanar and noise based calculations. This applies the texture based on the surface normal at each pixel, using the normal values to crossfade between different standard textures for each axis (x,y,z). The brilliance of this texturing technique is that you no longer have to worry what form the mesh makes, since it will always be textured consistently, without having to work out any UV co-ords. By adding some fractal distortion to the texture lookups in the shader you can also avoid obvious tiling artefacts and have control over the detail and scale of the final texture. There are a lot of tricks to producing interesting shaders for this approach (perhaps enough to justify another post entirely), but the result is worth it. There’s more info on this sort of texture mapping here.
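The crossfade at the heart of triplanar mapping can be sketched outside a shader. The Python below is only an illustration of the blend maths: the `sharpness` exponent, the function names and the idea of passing textures in as plain functions are all assumptions for the example, and the fractal distortion step is left out.

```python
def triplanar_weights(normal, sharpness=4.0):
    """Blend weights for the x, y and z projections, summing to 1.
    Assumes a non-zero normal. Higher sharpness gives tighter transitions."""
    ax, ay, az = (abs(c) ** sharpness for c in normal)
    total = ax + ay + az
    return (ax / total, ay / total, az / total)


def triplanar_sample(position, normal, tex_x, tex_y, tex_z, scale=1.0):
    """Sample three textures using world position instead of UVs, then
    crossfade them by how closely the normal faces each axis."""
    x, y, z = (c * scale for c in position)
    wx, wy, wz = triplanar_weights(normal)
    sample_x = tex_x(z, y)  # projected along the x axis
    sample_y = tex_y(x, z)  # projected along the y axis (top-down)
    sample_z = tex_z(x, y)  # projected along the z axis
    return wx * sample_x + wy * sample_y + wz * sample_z


# Hypothetical single-channel 'textures' for demonstration.
cliff = lambda u, v: 1.0
grass = lambda u, v: 2.0
rock = lambda u, v: 3.0

value = triplanar_sample((10.0, 4.0, 2.5), (0.0, 0.0, 1.0), cliff, grass, rock)
```

Because the lookups use world position, neighbouring polygons line up regardless of their size or orientation.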

SHAPING THE WORLD

Of course the actual shape of the polygon meshes produced by ‘skinning’ the voxels is dependent on how ‘interesting’ that data is. An unmodified noise function will produce a uniform distribution of forms with no consideration of axis. The actual aesthetic of the terrain comes from the various shaping functions that I developed to ‘carve out’ various selections of noise. Layered octaves are combined so that they modulate each other, and a set of modifiers based on vertical position (the higher a point gets, the smaller its density becomes) gives a nice range of resulting forms. You can use larger and larger scales of noise to mask out regions and superimpose other shaping functions on the basic data. This can help to eliminate the ‘same, same, but different’ feel of many procedural worlds.
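To make the idea of shaping concrete, here is a minimal Python sketch that layers octaves of simple value noise and then reduces density with height. The noise implementation, the octave count, the 0.1 sampling scale and the `falloff` constant are all assumptions for the example and are much cruder than the shaping functions described above.

```python
import math


def lattice(ix, iy, iz, seed):
    """Hypothetical hash: a repeatable value in [0, 1) per integer point."""
    n = (ix * 73856093) ^ (iy * 19349663) ^ (iz * 83492791) ^ (seed * 2654435761)
    n = ((n ^ (n >> 13)) * 1274126177) & 0xFFFFFFFF
    return n / 2**32


def lerp(a, b, t):
    return a + (b - a) * t


def value_noise(x, y, z, seed=0):
    """Trilinearly interpolated lattice noise."""
    x0, y0, z0 = math.floor(x), math.floor(y), math.floor(z)
    tx, ty, tz = x - x0, y - y0, z - z0
    c = [[[lattice(x0 + dx, y0 + dy, z0 + dz, seed) for dz in (0, 1)]
          for dy in (0, 1)] for dx in (0, 1)]
    x00 = lerp(c[0][0][0], c[1][0][0], tx)
    x10 = lerp(c[0][1][0], c[1][1][0], tx)
    x01 = lerp(c[0][0][1], c[1][0][1], tx)
    x11 = lerp(c[0][1][1], c[1][1][1], tx)
    return lerp(lerp(x00, x10, ty), lerp(x01, x11, ty), tz)


def layered_noise(x, y, z, octaves=4, seed=0):
    """Sum octaves, halving amplitude and doubling frequency each time."""
    total, norm, amplitude, frequency = 0.0, 0.0, 1.0, 1.0
    for i in range(octaves):
        total += amplitude * value_noise(x * frequency, y * frequency,
                                         z * frequency, seed + i)
        norm += amplitude
        amplitude *= 0.5
        frequency *= 2.0
    return total / norm


def density(x, y, z, seed=0, falloff=0.05):
    """Noise density reduced with height, so high points thin out to air."""
    return layered_noise(x * 0.1, y * 0.1, z * 0.1, seed=seed) - falloff * y


ground = density(3.0, 0.0, 5.0)
sky = density(3.0, 100.0, 5.0)
```

With these numbers anything far above the origin always ends up below zero, so a threshold of zero or more leaves the sky empty while the lower levels keep their noisy forms.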

I wasn’t entirely satisfied by this though, because although it led to an interesting range of forms across a large distance, it meant you were still at the whim of the noise equation in terms of exactly when and where specific forms might appear. We want to be able to guarantee certain features or region types will occur within a particular distance. To accomplish this I wrote a secondary system that uses Voronoi diagrams to generate a map of region types that are then used to directly affect the noise shaping functions. Each region type can dictate the form of the terrain, the distribution of resources and the type, frequency and difficulty of enemies. We can control the rarity and size of specific region types over a precise range (exactly 1 flat region per 100×100 units, for example). The parameters for the region map are held in a template object and any world generation can be based on any template object, allowing us to produce a wide range of worlds with different distributions of region types.
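One way to get this kind of guarantee is a jittered-grid Voronoi map, sketched below in Python. This is a hypothetical scheme for illustration and not the system in our engine: the region names, the 25 unit cell size and the rule of forcing one ‘flat’ site per 4×4 block of cells are all assumptions.

```python
import math

REGION_TYPES = ["hills", "spires", "canyon"]  # hypothetical non-flat types
CELL = 25   # assumed Voronoi cell size in world units
BLOCK = 4   # cells per block side, so one block covers 100x100 units


def h(a, b, seed, salt):
    """Hypothetical hash: a repeatable value in [0, 1)."""
    n = (a * 73856093) ^ (b * 19349663) ^ (seed * 83492791) ^ (salt * 2654435761)
    n = ((n ^ (n >> 13)) * 1274126177) & 0xFFFFFFFF
    return n / 2**32


def site(cx, cz, seed):
    """Return (x, z, region_type) for the single site in grid cell (cx, cz)."""
    sx = (cx + h(cx, cz, seed, 1)) * CELL
    sz = (cz + h(cx, cz, seed, 2)) * CELL
    # Pick exactly one cell in each block to be the flat region.
    bx, bz = cx // BLOCK, cz // BLOCK
    flat_index = int(h(bx, bz, seed, 3) * BLOCK * BLOCK)
    if (cx % BLOCK) * BLOCK + (cz % BLOCK) == flat_index:
        kind = "flat"
    else:
        kind = REGION_TYPES[int(h(cx, cz, seed, 4) * len(REGION_TYPES))]
    return sx, sz, kind


def region_at(x, z, seed=0):
    """Region type of the nearest site. Searching a 5x5 neighbourhood of
    cells is enough to always find the true nearest site."""
    cx, cz = math.floor(x / CELL), math.floor(z / CELL)
    best = None
    for dx in range(-2, 3):
        for dz in range(-2, 3):
            sx, sz, kind = site(cx + dx, cz + dz, seed)
            d = (sx - x) ** 2 + (sz - z) ** 2
            if best is None or d < best[0]:
                best = (d, kind)
    return best[1]


here = region_at(10.5, -42.0, seed=7)
```

The value returned by `region_at` could then select which shaping function, resource table or enemy set applies at that point. Swapping the constants for values read from a template object would give different worlds from the same code.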

INFINITY

The other aspect of the engine that has been both fascinating and frustrating is the fact that we are generating every world as an infinite plane. You can travel in any direction and the engine will keep making geometry until you reach the computational limit of your machine. To accomplish this the world is split into regular chunks that are generated when your character moves and are discarded when they go out of range (or rather cached). The noise functions that shape each chunk are tied to the x,y,z co-ordinates of the world and therefore progress logically across any axis. Since the terrain is unmodifiable, we aren’t saving mesh data but regenerating it every time a new chunk is requested. I did try saving the data to speed up chunk generation when revisiting areas, but the filesizes were prohibitive (do you save mesh data, noise data, use RLE…) and the speed gain wasn’t worth it. Each world is based on a seed, which can be generated randomly or dictated. The seed is used for all the geometry and scenery, so any player using the same seed will get the same world, same scenery, same enemies and so on.
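A stripped-down version of the chunk lifecycle might look like the Python sketch below. The chunk size, the view radius and the `World` class are assumptions for illustration, the chunk ‘contents’ are just a few seeded random numbers standing in for real geometry, and chunks are simply dropped here rather than cached.

```python
import random

CHUNK_SIZE = 16   # assumed chunk edge length in world units
VIEW_RADIUS = 1   # assumed number of chunks kept around the player


def chunk_of(position):
    """Map a world position (x, y, z) to integer chunk co-ordinates."""
    return tuple(int(c // CHUNK_SIZE) for c in position)


def generate_chunk(coord, world_seed):
    """Stand-in for real generation: same seed and co-ordinates in,
    same data out."""
    rng = random.Random(f"{world_seed}:{coord[0]}:{coord[1]}:{coord[2]}")
    return [rng.random() for _ in range(4)]


class World:
    def __init__(self, world_seed):
        self.world_seed = world_seed
        self.active = {}  # chunk co-ordinates -> generated data

    def update(self, player_position):
        """Generate chunks that came into range, drop those that left."""
        cx, cy, cz = chunk_of(player_position)
        span = range(-VIEW_RADIUS, VIEW_RADIUS + 1)
        wanted = {(cx + dx, cy + dy, cz + dz)
                  for dx in span for dy in span for dz in span}
        for coord in list(self.active):
            if coord not in wanted:
                del self.active[coord]
        for coord in wanted:
            if coord not in self.active:
                self.active[coord] = generate_chunk(coord, self.world_seed)


world = World(world_seed=42)
world.update((0.0, 0.0, 0.0))
first_visit = world.active[(0, 0, 0)]
world.update((1000.0, 0.0, 0.0))   # walk far away; the origin chunk is dropped
world.update((0.0, 0.0, 0.0))      # come back; it is regenerated identically
```

Because generation depends only on the seed and the chunk co-ordinates, nothing needs to be stored for terrain that cannot change.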

Of course the world can’t be stateless, since we are trying to build something persistent. Therefore changes to objects that aren’t static (consumed resources, defeated enemies etc.) are saved per chunk to a world-seed savefile. This ensures that coming back to the same location will not regenerate objects that have been previously destroyed or removed.
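The save side can stay small because only the differences from the generated world need recording. The Python sketch below shows one possible shape for this. The JSON layout, the object ids and the `SaveFile` class are assumptions for the example, not our actual savefile format.

```python
import json
import random


def spawn_objects(coord, world_seed, count=5):
    """Stand-in for seeded scenery/enemy placement in one chunk."""
    rng = random.Random(f"{world_seed}:{coord}")
    return {f"obj{i}": (rng.random(), rng.random()) for i in range(count)}


class SaveFile:
    """Records, per chunk, the ids of objects that have been removed."""

    def __init__(self, world_seed, removed=None):
        self.world_seed = world_seed
        self.removed = removed or {}  # "x,y,z" -> list of removed ids

    @staticmethod
    def key(coord):
        return ",".join(str(c) for c in coord)

    def remove(self, coord, object_id):
        ids = self.removed.setdefault(self.key(coord), [])
        if object_id not in ids:
            ids.append(object_id)

    def live_objects(self, coord):
        """Regenerate the chunk's objects, then filter out removed ones."""
        gone = set(self.removed.get(self.key(coord), []))
        spawned = spawn_objects(coord, self.world_seed)
        return {oid: pos for oid, pos in spawned.items() if oid not in gone}

    def dumps(self):
        return json.dumps({"seed": self.world_seed, "removed": self.removed})

    @classmethod
    def loads(cls, text):
        data = json.loads(text)
        return cls(data["seed"], data["removed"])


save = SaveFile(world_seed=42)
save.remove((0, 0, 0), "obj2")          # e.g. an enemy was defeated
restored = SaveFile.loads(save.dumps())  # quit and reload
remaining = restored.live_objects((0, 0, 0))
```

After reloading, the removed object stays gone while everything else in the chunk comes back exactly where the seed put it.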

More soon!

You can browse my other work over at Nullpointer.co.uk