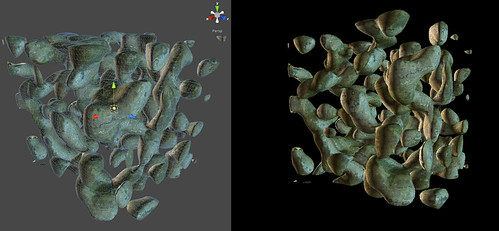

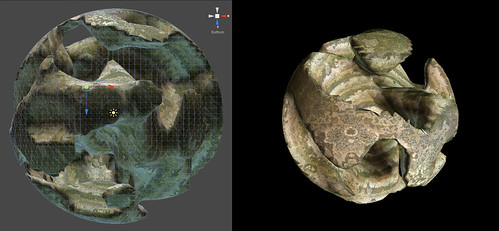

Still pursuing different methods of generating planetary/sculptural forms, I realised that my previous cubic-sphere approach was incapable of producing caves or overhangs. I could of course add additional layers at overlapping altitudes, but how would I generate the actual data for these? With more than a few layers the calculations could get prohibitive, and I might end up turning to a 3D noise array anyway to produce the number of layers I want. I also found that some of my cubic-sphere code was wasting triangles by assigning them inside other cells to allow for cliff generation (breaking out of the ‘pushed up’ mesh of a general heightfield map). While wondering what mesh function I could use to actually produce overhangs, caves etc., I came across voxel skinning.

I was familiar with the technique from oldskool metaballs demoscene stuff, but why not take the same approach to skinning an array of 3D terrain data? Essentially this approach meant changing the terrain heightmap into a 3D noise array, where each point is a density value. These values are then read by a recursive routine that works out the best polygon skin based on a threshold level (of what is in and what is out of ‘solidity’). So far I'm generating the noise array from a few octaves of simplex noise and some radial/planar modifiers. It's pretty much the same way games like Minecraft generate a 3D solids map, except I'm skinning them with unique triangles rather than identical blocks.
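To make the density-field idea concrete, here is a minimal Python sketch under some loud assumptions. `cheap_noise` is a hypothetical stand-in for simplex noise (a product of sines, just so the example runs without a noise library), and the function names, grid size and `roughness` value are all made up for illustration. It builds a radially modified density grid and then finds the cells that straddle the threshold, which are the only cells a skinning routine would need to emit triangles for. It does not do the skinning itself.

```python
import math

def cheap_noise(x, y, z, seed=0):
    # Hypothetical stand-in for simplex noise: smooth, deterministic, in [-1, 1].
    return (math.sin(x * 1.7 + seed)
            * math.cos(y * 2.3 - seed)
            * math.sin(z * 1.3 + 0.5 * seed))

def fractal_noise(x, y, z, octaves=3):
    # Sum a few octaves: each octave doubles the frequency and halves the amplitude.
    total, amp, freq, norm = 0.0, 1.0, 1.0, 0.0
    for i in range(octaves):
        total += amp * cheap_noise(x * freq, y * freq, z * freq, seed=i)
        norm += amp
        amp *= 0.5
        freq *= 2.0
    return total / norm  # normalised back into [-1, 1]

def density(x, y, z, radius=1.0, roughness=0.3):
    # Radial modifier: positive inside a sphere of `radius`, negative outside,
    # then roughened by the noise so the surface is no longer a perfect sphere.
    r = math.sqrt(x * x + y * y + z * z)
    return (radius - r) + roughness * fractal_noise(x, y, z)

def build_density_grid(n, extent=1.5):
    # Sample density on an n*n*n lattice covering [-extent, extent] on each axis.
    step = 2.0 * extent / (n - 1)
    return [[[density(-extent + i * step, -extent + j * step, -extent + k * step)
              for k in range(n)]
             for j in range(n)]
            for i in range(n)]

def surface_cells(grid, threshold=0.0):
    # A cell needs skinning only if some of its 8 corners are solid and some are not.
    n = len(grid)
    cells = []
    for i in range(n - 1):
        for j in range(n - 1):
            for k in range(n - 1):
                solid = [grid[i + di][j + dj][k + dk] > threshold
                         for di in (0, 1) for dj in (0, 1) for dk in (0, 1)]
                if any(solid) and not all(solid):
                    cells.append((i, j, k))
    return cells

grid = build_density_grid(9)
print(len(surface_cells(grid)), "of", 8 ** 3, "cells cross the surface")
```

Raising or lowering `threshold` grows or shrinks the solid region, and because the density is a full 3D function rather than a single height per column, overhangs and enclosed pockets fall out for free.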

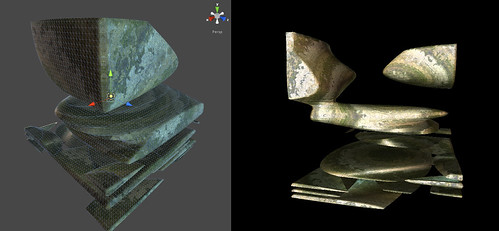

One of the major difficulties is how to texture the resulting surfaces. Since they are complex 3D geometry, it's impossible to simply axis splat them with a texture as I did with cubic spheres. The potential UV unwrap of any of these forms would be incredibly complex to calculate in a traditional way. The solution was to texture the object through a shader, using a ‘virtual’ texture. This texture is defined mainly by world space co-ordinates rather than the object points themselves (in fact no UVs are ever used). I used a combination of two techniques:

1) A gradient lookup texture. This simply picks a UV texel from the image based on the world co-ordinate. In a flat terrain this would mean mapping the y axis to read the vertical axis of the source texture. As I was making spheres, I used the magnitude of the world co-ordinate instead. I then offset the texel selection in both u and v by a sample of 3D noise.

2) Triplanar mapping. This produces three UV readings, one for each axis (x, y, z), and then calculates the mix of these three mappings for any normal on the model. A normal ‘on top’ of the model would receive almost 100% of the y planar map, and other angles receive appropriate mixes of the three planes. This was also perturbed by a sample of 3D noise (to slightly distort the world normal of each point). I ended up using the triplanar technique to actually produce my 3D noise samples as well, since the gfx cards I'm using don't support true volume textures.
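The two lookups above boil down to a little arithmetic, sketched here in Python rather than shader code so it can be run on its own. Everything named here is an assumption for illustration: the `sharpness` exponent on the blend weights, the choice of which two axes feed each planar projection, the `inner_radius`/`outer_radius` range, and the baseline `u = 0.5` before the noise offset (the post does not say what u starts from).

```python
import math

def triplanar_weights(normal, sharpness=4.0):
    # Blend weight per axis from the absolute normal; a higher `sharpness`
    # narrows the transition bands. Weights always sum to 1.
    ax, ay, az = (abs(c) ** sharpness for c in normal)
    total = ax + ay + az
    if total == 0.0:
        return (1.0 / 3.0, 1.0 / 3.0, 1.0 / 3.0)
    return (ax / total, ay / total, az / total)

def triplanar_uvs(pos, scale=1.0):
    # One UV pair per projection axis, taken straight from world position.
    x, y, z = pos
    return {
        "x": (y * scale, z * scale),  # looking along x
        "y": (x * scale, z * scale),  # looking along y (the 'top' map)
        "z": (x * scale, y * scale),  # looking along z
    }

def triplanar_sample(sample, pos, normal, scale=1.0):
    # `sample(u, v)` stands in for a 2D texture fetch returning a float.
    wx, wy, wz = triplanar_weights(normal)
    uvs = triplanar_uvs(pos, scale)
    return (wx * sample(*uvs["x"])
            + wy * sample(*uvs["y"])
            + wz * sample(*uvs["z"]))

def clamp01(t):
    return max(0.0, min(1.0, t))

def gradient_lookup_uv(pos, inner_radius, outer_radius, noise_offset=(0.0, 0.0)):
    # For a sphere, distance from the centre plays the role that height (y)
    # would play on flat terrain: it selects the row of the gradient texture.
    r = math.sqrt(sum(c * c for c in pos))
    v = (r - inner_radius) / (outer_radius - inner_radius)
    u = 0.5  # assumed baseline; the noise offset then nudges both u and v
    return (clamp01(u + noise_offset[0]), clamp01(v + noise_offset[1]))

print(triplanar_weights((0.0, 1.0, 0.0)))               # top-facing normal
print(gradient_lookup_uv((0.0, 0.0, 1.5), 1.0, 2.0))    # halfway up the gradient
```

Feeding a noise-perturbed normal into `triplanar_weights` gives the slightly distorted blend described in 2), and passing a noise sample as `noise_offset` gives the texel jitter described in 1).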

I'm quite pleased with the results, and the voxel skinning is already done in chunks, so it's extendable and capable of simple LOD if I want to expand the overall volume. However, the biggest problem is likely to be storage/memory, especially during generation, because the number of samples now grows with the cube of the resolution rather than the square. More detailed images are available on my flickr stream.
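To put rough numbers on the squared-versus-cubed problem, here is a back-of-envelope calculation in Python. The 4 bytes per sample (one 32-bit float), the 256 resolution and the 32-sample chunk size are assumptions picked for illustration, not figures from my own setup.

```python
def heightmap_bytes(n, bytes_per_sample=4):
    # A heightmap stores one sample per (x, z) column: n * n samples.
    return n * n * bytes_per_sample

def volume_bytes(n, bytes_per_sample=4):
    # A density volume stores one sample per (x, y, z) point: n * n * n samples.
    return n ** 3 * bytes_per_sample

n = 256
print(heightmap_bytes(n) // 1024, "KiB")                # 256 KiB
print(volume_bytes(n) // (1024 * 1024), "MiB")          # 64 MiB
print(volume_bytes(32) // 1024, "KiB per 32^3 chunk")   # 128 KiB
```

At the same resolution the volume costs `n` times as much as the heightmap, which is why generating and skinning one chunk at a time and then discarding its density data is attractive.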